While Washington’s breakup with Anthropic exposed the complete absence of any coherent rules governing artificial intelligence, a bipartisan coalition of thinkers has assembled something the federal government has so far declined to produce: a framework for what responsible AI development should actually look like.

The Pro-Human Declaration was finalized before last week’s Pentagon-Anthropic standoff, but the collision of the two events wasn’t lost on anyone involved.

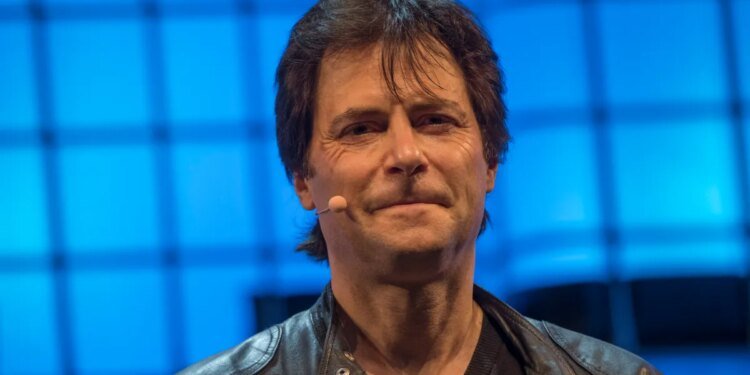

“There’s something quite remarkable that has happened in America just in the last four months,” said Max Tegmark, the MIT physicist and AI researcher who helped organize the effort, in conversation with this editor. “Polling suddenly [is showing] that 95% of all Americans oppose an unregulated race to superintelligence.”

The newly published document, signed by hundreds of experts, former officials, and public figures, opens with the no-nonsense observation that humanity is at a fork in the road. One path, which the declaration calls “the race to replace,” leads to humans being supplanted first as workers, then as decision-makers, as power accrues to unaccountable institutions and their machines. The other leads to AI that massively expands human potential.

The latter scenario depends on five key pillars: keeping humans in charge, avoiding the concentration of power, protecting the human experience, preserving individual liberty, and holding AI companies legally accountable. Among its more muscular provisions are an outright prohibition on superintelligence development until there is scientific consensus that it can be done safely and genuine democratic buy-in; mandatory off-switches on powerful systems; and a ban on architectures that are capable of self-replication, autonomous self-improvement, or resistance to shutdown.

The declaration’s release coincides with a period that makes its urgency far easier to appreciate. On the last Friday in February, Defense Secretary Pete Hegseth designated Anthropic, whose AI already runs on classified military platforms, a “supply chain risk,” a label ordinarily reserved for companies with ties to China, after the company refused to grant the Pentagon unlimited use of its technology. Hours later, OpenAI cut its own deal with the Defense Department, one that legal experts say will be difficult to enforce in any meaningful way. What it all laid bare is how costly Congressional inaction on AI has become.

As Dean Ball, a senior fellow at the Foundation for American Innovation, told The New York Times afterward, “This isn’t just some dispute over a contract. This is the first conversation we have had as a country about control over AI systems.”

When we spoke, Tegmark reached for an analogy that most people can understand. “You never have to worry that some drug company is going to release some other drug that causes massive harm before people have figured out how to make it safe,” he said, “because the FDA won’t allow them to release anything until it’s safe enough.”

Washington turf wars rarely generate the kind of public pressure that changes laws. Instead, Tegmark sees child safety as the pressure point most likely to crack the current impasse. Indeed, the declaration calls for mandatory pre-deployment testing of AI products, particularly chatbots and companion apps aimed at younger users, covering risks including increased suicidal ideation, exacerbation of mental health conditions, and emotional manipulation.

“If some creepy old man is texting an 11-year-old pretending to be a young girl and trying to persuade this boy to commit suicide, the guy can go to jail for that,” Tegmark said. “We already have laws. It’s illegal. So why is it different if a machine does it?”

He believes that once the principle of pre-release testing is established for children’s products, the scope will widen almost inevitably. “People will come along and be like, let’s add a few other requirements. Maybe we should also test that this can’t help terrorists make bioweapons. Maybe we should test to make sure that superintelligence doesn’t have the ability to overthrow the U.S. government.”

It’s no small thing that former Trump advisor Steve Bannon and Susan Rice, President Obama’s National Security Advisor, have signed the same document, along with former Joint Chiefs Chairman Mike Mullen and progressive faith leaders.

“What they agree on, of course, is that they’re all human,” says Tegmark. “If it’s going to come down to whether we want a future for humans or a future for machines, of course they’re going to be on the same side.”
