Relating to coding, peer suggestions is essential for catching bugs early, sustaining consistency throughout a codebase, and bettering total software program high quality.

The rise of “vibe coding” — utilizing AI instruments that take directions given in plain language and rapidly generate massive quantities of code — has modified how builders work. Whereas these instruments have sped up improvement, they’ve additionally launched new bugs, safety dangers, and poorly understood code.

Anthropic’s answer is an AI reviewer designed to catch bugs earlier than they make it into the software program’s codebase. The brand new product, known as Code Evaluate, launched Monday in Claude Code.

“We’ve seen quite a lot of development in Claude Code, particularly inside the enterprise, and one of many questions that we hold getting from enterprise leaders is: Now that Claude Code is placing up a bunch of pull requests, how do I guarantee that these get reviewed in an environment friendly method?” Cat Wu, Anthropic’s head of product, informed TechCrunch.

Pull requests are a mechanism that builders use to submit code adjustments for evaluation earlier than these adjustments make it into the software program. Wu stated Claude Code has dramatically elevated code output, which has elevated pull request opinions which have brought about a bottleneck to delivery code.

“Code Evaluate is our reply to that,” Wu stated.

Anthropic’s launch of Code Evaluate — arriving first to Claude for Groups and Claude for Enterprise prospects in analysis preview — comes at a pivotal second for the corporate.

Techcrunch occasion

San Francisco, CA

|

October 13-15, 2026

On Monday, Anthropic filed two lawsuits in opposition to the Division of Protection in response to the company’s designation of Anthropic as a provide chain danger. The dispute will seemingly see Anthropic leaning extra closely on its booming enterprise enterprise, which has seen subscriptions quadruple for the reason that begin of the 12 months. Claude Code’s run-rate income has surpassed $2.5 billion since launch, based on the corporate.

“This product could be very a lot focused in the direction of our bigger scale enterprise customers, so corporations like Uber, Salesforce, Accenture, who already use Claude Code and now need assist with the sheer quantity of [pull requests] that it’s serving to produce,” Wu stated.

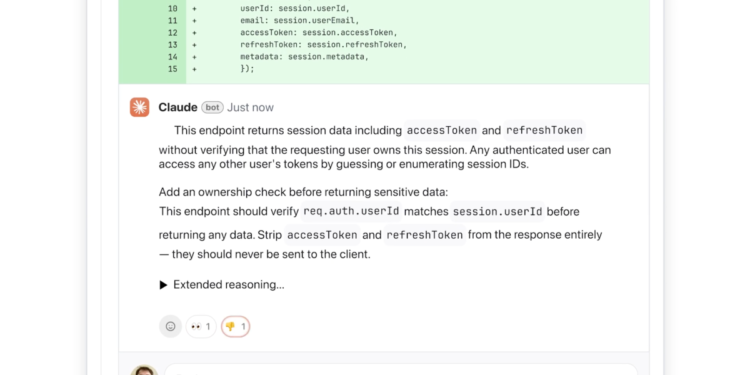

She added that developer leads can activate Code Evaluate to run on default for each engineer on the workforce. As soon as enabled, it integrates with GitHub and routinely analyzes pull requests, leaving feedback immediately on the code explaining potential points and recommended fixes.

The main target is on fixing logical errors over fashion, Wu stated.

“That is actually necessary as a result of quite a lot of builders have seen AI automated suggestions earlier than, they usually get aggravated when it’s not instantly actionable,” Wu stated. “We determined we’re going to focus purely on logic errors. This fashion we’re catching the best precedence issues to repair.”

The AI explains its reasoning step-by-step, outlining what it thinks the difficulty is, why it could be problematic, and the way it can probably be fastened. The system will label the severity of points utilizing colours: crimson for highest severity, yellow for potential issues price reviewing, and purple for points tied to preexisting code or historic bugs.

Wu stated it does this rapidly and effectively by counting on a number of brokers working in parallel, with every agent analyzing the codebase from a special perspective or dimension. A closing agent aggregates and ranks the findings, eradicating duplicates and prioritizing what’s most necessary.

The instrument offers a light-weight safety evaluation, and engineering leads can customise further checks based mostly on inner greatest practices. Wu stated Anthropic’s extra just lately launched Claude Code Security offers a deeper safety evaluation.

The multi-agent structure means this generally is a resource-intensive product, Wu stated. Just like different AI providers, pricing is token-based, and the associated fee varies relying on code complexity — although Wu estimated every evaluation would value $15 to $25 on common. She added that it’s a premium expertise, and a needed one as AI instruments generate increasingly code.

“[Code Review] is one thing that’s coming from an insane quantity of market pull,” Wu stated. “As engineers develop with Claude Code, they’re seeing the friction to creating a brand new characteristic [decrease], they usually’re seeing a a lot greater demand for code evaluation. So we’re hopeful that with this, we’ll allow enterprises to construct sooner than they ever might earlier than, and with a lot fewer bugs than they ever had earlier than.”

Thanks for studying! Be a part of our neighborhood at Spectator Daily