Flash floods are among the deadliest weather events on Earth, killing more than 5,000 people every year. They’re also among the most difficult to predict. But Google thinks it has cracked that problem in an unlikely way: by reading the news.

While humans have assembled a lot of weather data, flash floods are too short-lived and localized to be measured comprehensively, the way temperature or even river flows are monitored over time. That data gap means that deep learning models, which are increasingly capable of forecasting the weather, aren’t able to predict flash floods.

To solve that problem, Google researchers used Gemini, Google’s large language model, to sort through 5 million news articles from around the world, isolating reports of 2.6 million different floods and turning those reports into a geo-tagged time series dubbed “Groundsource.” It’s the first time that the company has used language models for this kind of work, according to Gila Loike, a Google Research product manager. The research and data set were shared publicly Thursday morning.
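As a rough illustration of what “turning reports into a geo-tagged time series” can mean, the sketch below bins already-extracted flood reports by grid cell and day. It is not Google’s pipeline: the `FloodReport` schema, the 0.25-degree cell size, and the function names are all hypothetical, and the language-model extraction step is assumed to have happened upstream.

```python
import math
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class FloodReport:
    """One flood mention pulled from a news article (hypothetical schema)."""
    lat: float
    lon: float
    day: date


def grid_cell(lat: float, lon: float, cell_deg: float = 0.25) -> tuple[int, int]:
    """Snap a coordinate to an integer grid cell index.

    The 0.25-degree cell size is an assumption made for this example.
    """
    return (math.floor(lat / cell_deg), math.floor(lon / cell_deg))


def build_time_series(reports, cell_deg: float = 0.25) -> Counter:
    """Count reports per (grid cell, day).

    Several articles about the same flood land in the same key, so the
    set of keys approximates distinct flood events.
    """
    counts = Counter()
    for report in reports:
        key = (grid_cell(report.lat, report.lon, cell_deg), report.day)
        counts[key] += 1
    return counts


if __name__ == "__main__":
    # Made-up coordinates and dates, for illustration only.
    reports = [
        FloodReport(-25.97, 32.57, date(2024, 1, 5)),
        FloodReport(-25.90, 32.60, date(2024, 1, 5)),
        FloodReport(6.52, 3.38, date(2024, 1, 5)),
    ]
    for (cell, day), n in sorted(build_time_series(reports).items()):
        print(cell, day.isoformat(), n)
```

Keying on cell and day is the simplest possible way to merge duplicate coverage; a real data set would likely need fuzzier matching across neighboring cells and dates.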

With Groundsource as a real-world baseline, the researchers trained a model built on a Long Short-Term Memory (LSTM) neural network to ingest global weather forecasts and generate the probability of flash floods in a given area.
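For readers unfamiliar with LSTMs, here is a minimal, untrained sketch of the general idea: a recurrent cell reads a sequence of forecast features one step at a time, and a final sigmoid turns its last hidden state into a probability. The feature layout, parameter names, and sizes are assumptions chosen for illustration; nothing here reflects the architecture or weights of Google’s model.

```python
import math
import random


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def lstm_step(x, h, c, W, b):
    """One standard LSTM step.

    W[gate] holds one weight row per hidden unit over the concatenated
    [x, h] vector; the gates are input (i), forget (f), output (o) and
    candidate (g).
    """
    z = x + h  # list concatenation of input and previous hidden state

    def affine(gate):
        return [sum(w * v for w, v in zip(row, z)) + bias
                for row, bias in zip(W[gate], b[gate])]

    i = [sigmoid(a) for a in affine("i")]
    f = [sigmoid(a) for a in affine("f")]
    o = [sigmoid(a) for a in affine("o")]
    g = [math.tanh(a) for a in affine("g")]
    c_new = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c, i, g)]
    h_new = [ok * math.tanh(ck) for ok, ck in zip(o, c_new)]
    return h_new, c_new


def flood_probability(forecast_seq, W, b, w_out, b_out):
    """Run the cell over a forecast sequence and squash the result to (0, 1)."""
    hidden = len(b["i"])
    h, c = [0.0] * hidden, [0.0] * hidden
    for x in forecast_seq:
        h, c = lstm_step(list(x), h, c, W, b)
    logit = sum(w * v for w, v in zip(w_out, h)) + b_out
    return sigmoid(logit)


def random_params(n_in, hidden, seed=0):
    """Random, untrained parameters; a real model would learn these from data."""
    rng = random.Random(seed)
    W = {gate: [[rng.uniform(-0.5, 0.5) for _ in range(n_in + hidden)]
                for _ in range(hidden)] for gate in "ifog"}
    b = {gate: [0.0] * hidden for gate in "ifog"}
    w_out = [rng.uniform(-0.5, 0.5) for _ in range(hidden)]
    return W, b, w_out, 0.0


if __name__ == "__main__":
    # Hypothetical features per time step: rainfall, soil moisture, temperature.
    forecast = [[0.1, 0.6, 0.3], [0.8, 0.7, 0.3], [0.9, 0.9, 0.2]]
    params = random_params(n_in=3, hidden=4)
    print(flood_probability(forecast, *params))
```

Because the weights are random, the printed number is meaningless as a forecast. In a trained system, observed flood reports such as those in Groundsource would supply the labels used to fit the weights.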

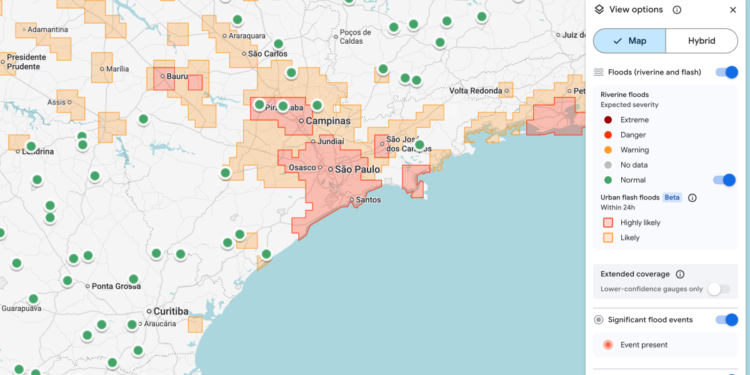

Google’s flash flood forecasting model is now highlighting risks for urban areas in 150 countries on the company’s Flood Hub platform, and sharing its data with emergency response agencies around the world. António José Beleza, an emergency response official at the Southern African Development Community who trialed the forecasting model with Google, said it helped his organization respond to floods more quickly.

There are still limitations to the model. For one, it’s fairly low resolution, identifying risk across 20-square-kilometer areas. And it’s not as precise as the US National Weather Service’s flood alert system, in part because Google’s model doesn’t incorporate local radar data, which enables real-time tracking of precipitation.

Part of the point, though, is that the project was designed to work in places where local governments can’t afford to invest in expensive weather-sensing infrastructure or don’t have extensive records of meteorological data.

“Because we’re aggregating millions of reports, the Groundsource data set really helps rebalance the map,” Juliet Rothenberg, a program manager on Google’s Resilience team, told reporters this week. “It allows us to extrapolate to other areas where there isn’t as much information.”

Rothenberg said the team hopes that using LLMs to develop quantitative data sets from written, qualitative sources can be applied to efforts to build data sets about other ephemeral-but-important-to-forecast phenomena, like heat waves and mudslides.

Marshall Moutenot, the CEO of Upstream Tech, a company that uses similar deep learning models to forecast river flows for customers like hydropower companies, said Google’s contribution is part of a growing effort to assemble data for deep learning-based weather forecasting models. Moutenot co-founded dynamical.org, a group curating a collection of machine learning-ready weather data for researchers and startups.

“Data scarcity is one of the most difficult challenges in geophysics,” Moutenot said. “Simultaneously, there’s too much Earth data, and then when you want to evaluate against truth, there’s not enough. This was a very creative way to get that data.”
